Not-gate, October surprises and the challenge of 'Between'

Lessons from the community moderation trenches

Editor’s note: Forecast is a new community for discussing the future, organized by topics that matter to people. Whether about news, politics, social issue, sports or pop culture, Forecasters work together to share their knowledge about what's going on today to build a better understanding of what will happen tomorrow. Download the app to join the community.

In this series, we delve into how we think about content moderation in Forecast today, and where we’re trying to get. In part 1, we gave background on Forecast’s current approach to moderation and what makes the problem hard. In this post, we’ll share a few ‘lessons from the trenches’ of prediction market content moderation. Next time we’ll talk about some of the tools we’re rolling out to further empower our users as moderators.

On Forecast, question phrasing is more than semantic - it impacts whether or not people can make forecasts (i.e. one of the main things people do in our app). Often, a question may look reasonable, but hidden details can make it unsettle-able. When moderating questions, we need to ensure we have a contingency plan for every possible outcome, however unlikely - for example, sports event cancellations, business announcements that don’t actually take place, and information going missing when it’s time to settle a question. Below, you’ll find a few lessons learned through the lens of real questions we launched (and then unlaunched) on Forecast:

Not-gate: why questions need mutually exclusive answers

What happens before October…still happens: don’t arbitrarily restrict the timeline for event outcomes

“Between” and its discontents: be really super clear in the question wording

These lessons may seem obvious, but, as you’ll see below, they came with a learning curve. Luckily, the Forecast community has our backs: they caught all these issues before we did! Thanks to their continuous feedback, we continue to evolve the way we moderate questions—and provide more paths to incorporate their feedback.

Lesson 1: Not-Gate

Or: why questions need mutually exclusive answer choices

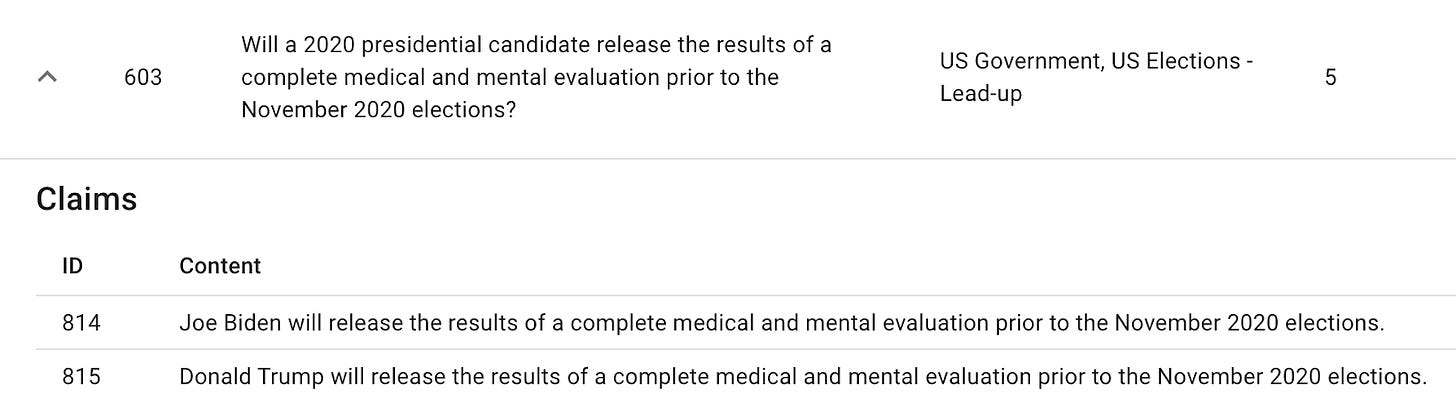

Looking at this question, you may think that we’ve accounted for all possible answer choices. After all, the question asks if a presidential candidate will release a medical and mental evaluation prior to the election, and the answer choices seem to reflect the only possible outcomes. However, we haven’t accounted for both or neither candidates releasing evaluations. Our settlement model requires only one question to be settled “Yes”.

This question came from an earlier version of the app, when we thought of questions as an aggregation of multiple events that aren't tied together, as opposed to resulting in a single outcome. Luckily for us, our users caught on quickly and helped us course correct. Here’s what Joey had to say about this question:

As Joey correctly pointed out, this market was ill-formed and had to be refunded. If we were to write this question now, we would write this question one of two ways:

Option 1 - Split into two questions:

1a. Will Donald Trump release the results of a complete medical and mental evaluation prior to the election?

Answer Choice 1: Yes

Answer Choice 2: No

1b. Will Joe Biden release the results of a complete medical and mental evaluation prior to the election?

Answer Choice 1: Yes

Answer Choice 2: No

Option 2 - Frame it as a Yes/No for either candidate:

2. Will a 2020 presidential candidate release the results of a complete medical and mental evaluation prior to the election?

Answer Choice 1: Yes

Answer Choice 2: No

This question was an excellent lesson in ensuring our markets are properly formed (with only 1 claim that can be settled “Yes”) in the future.

Lesson 2: what happens before October…

Or: don’t arbitrarily restrict the timeline for event outcomes

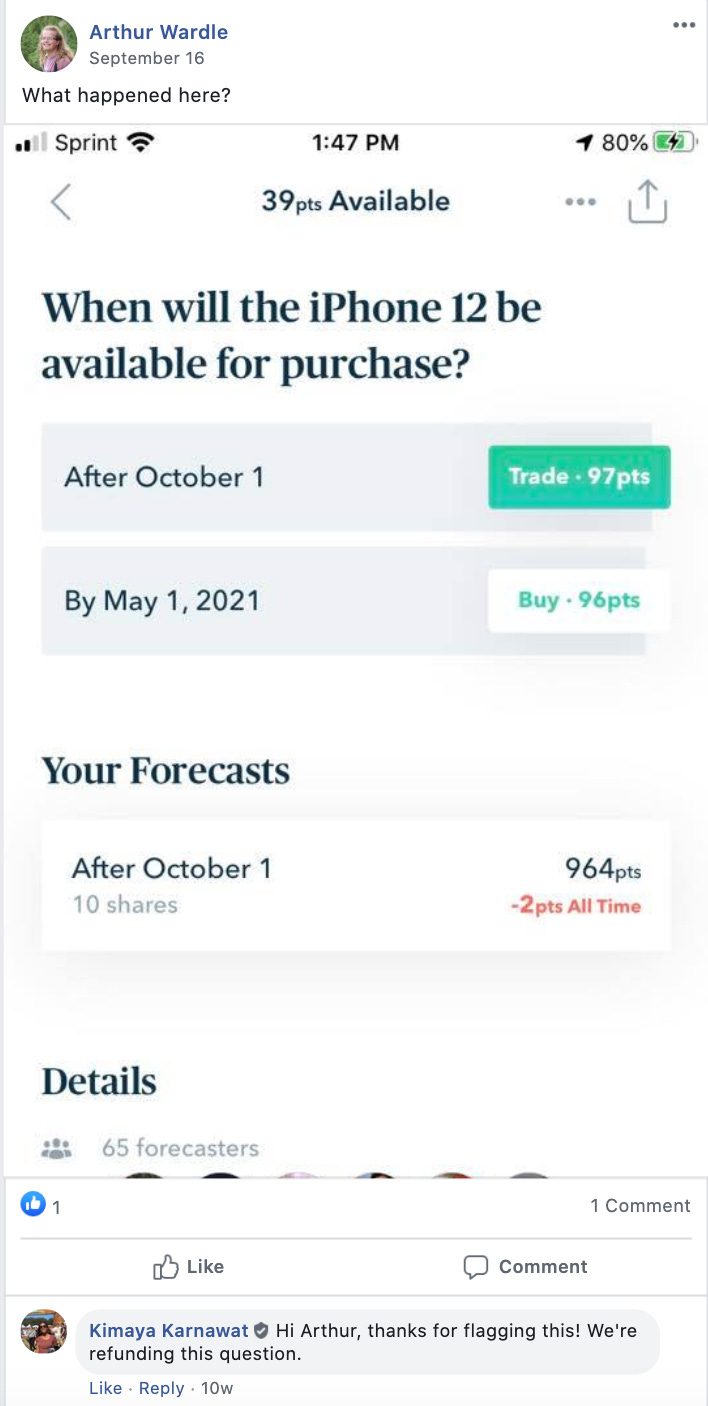

Timelines, at the core of many of our questions, have come with a learning curve. This question is a classic example of what can happen when we don’t include all possible timeframes in which an outcome can occur. There are some key mistakes in this question:

There’s no year associated with October 1. Is it in 2020? 2021? 2025?

Assuming it is October 1, 2020, there is no answer choice to account for the iPhone 12 being released before October 1, 2020.

The choices are not mutually exclusive. Assuming the iPhone 12 is released on October 2, 2020, both answer choices would technically settle as “Yes” - not an acceptable outcome, as we learned in Lesson 1.

We arbitrarily chose the later date of May 1, 2021. If the iPhone was released after May 1, 2021, both of these answer choices would need to settle as “No” - also an unacceptable outcome.

The correct way to write this question would have been to include mutually exclusive timeframes that account for all potential release dates. For example:

Question: When will the iPhone 12 be available for purchase?

Answers:

Before October 1, 2020

Between October 1, 2020 and May 1, 2021 (inclusive)

After May 1, 2021

Thanks to Arthur pointing out the erroneous question, we were able to refund it and re-launch a more correct version of the question that we were able to settle with no issues.

Lesson 3: “Between” and its discontents

Or: being really super clear in the question wording

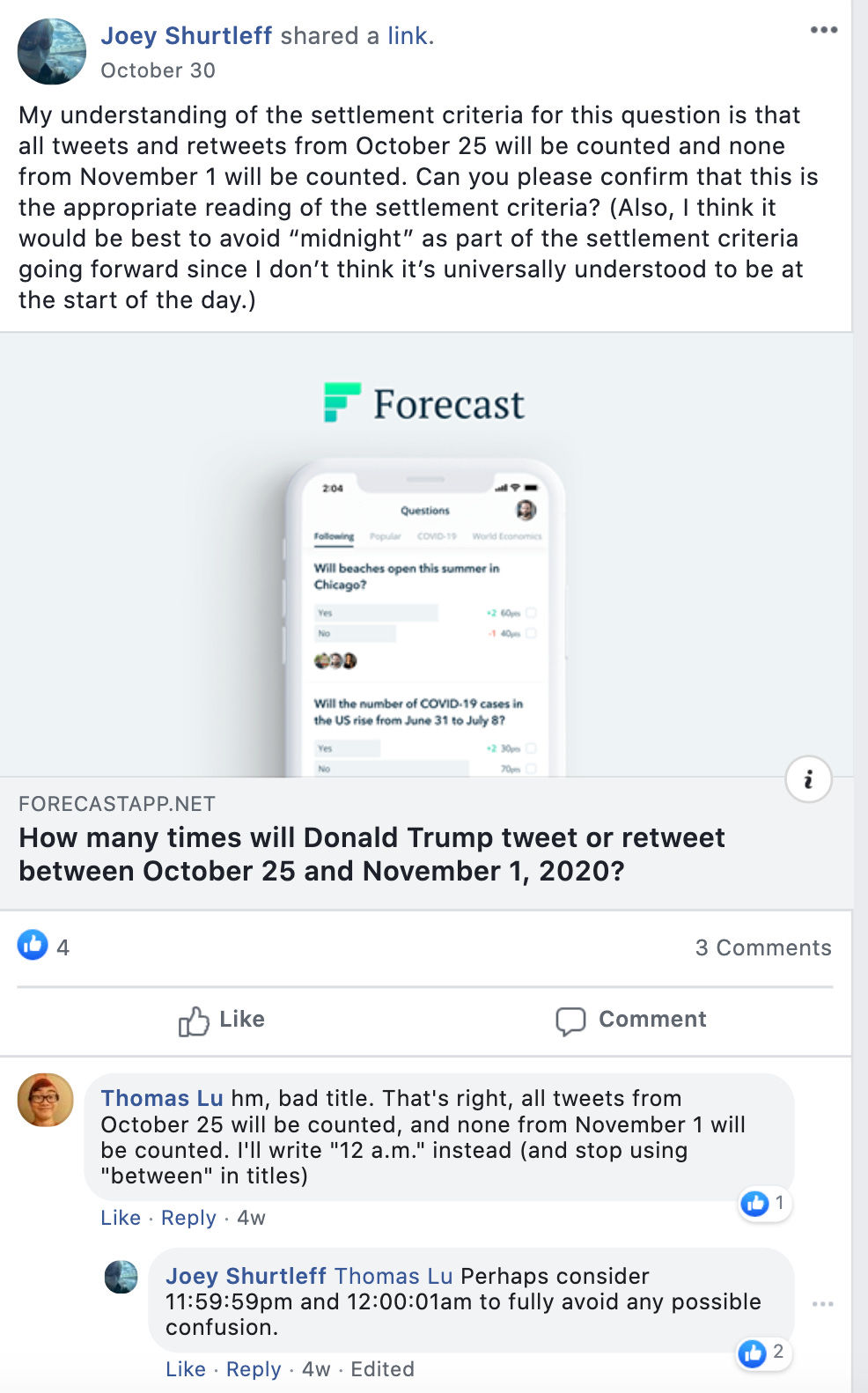

If you look through our settlement criteria on different questions since we launched Forecast, you’ll find that they’ve gotten longer and longer over time. That’s because we’ve learned to be as detailed as possible when it comes to describing the parameters of our questions. Every piece of clarifying information counts - especially to our detail-oriented, data-driven users. In this question, it was unclear whether the Tweet count would include Tweets on 10/25 and 11/1. Thanks to Joey’s request for clarification, we were able to modify the settlement criteria to clarify the bounds of the dates. Since this question, we’ve included timestamps in all of our time frame-dependent settlement criteria.

What have we learned?

When it comes to community moderation, Forecast has both advantages and unique challenges. On the upside, the core Forecast mechanics--in which people are rewarded with points when they write well-supported reasons and incentivized to report ill-formed questions lest they tie up points in a forecast that ultimately won’t deliver a return--lend themselves to self-governance. Even without a self-moderation model, we already see both high quality reasons and a significant percentage of daily users making reports when there’s something wrong with a question (more on that to come). By continuing to empower our users to moderate content on Forecast, we hope to build a community that holds each other and the Forecast team accountable for building a great user experience.